Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Nvidia’s Rubin chips turn AI into cheap infrastructure. That’s why open intelligence marketplaces like Bittensor are starting to matter.

Nvidia used CES 2026 to signal a major change in how artificial intelligence works. The company did not lead with consumer GPUs. Instead, it introduced Rubin, a rack-scale AI computing platform designed to make large-scale inference faster, cheaper and more efficient.

Nvidia’s CES reveal made clear that it no longer sells individual chips. It sells AI factories.

Rubin is Nvidia’s next-generation data center platform, the successor to Blackwell. It combines new GPUs, high-bandwidth HBM4 memory, dedicated processors and ultra-fast interconnects in a tightly integrated system.

Unlike previous generations, Rubin treats the entire rack as a single computing unit. This design reduces data movement, improves memory access, and reduces the cost of managing large models.

As a result, cloud providers and enterprises can run long-context, inference-heavy AI at a much lower cost per token.

This matters because modern AI applications no longer look like a single chatbot. They rely more and more on many small models, agents and specialized services that communicate with each other in real time.

By making inference cheaper and more scalable, Rubin enables a new kind of AI economy. Developers can deploy thousands of fine-tuned models instead of one huge model.

Organizations can run agent-based systems that use multiple models for different tasks.

However, this creates a new problem. As AI becomes modular and abundant, someone must decide which model handles each request. Someone must measure performance, manage trust and route payments.

Cloud platforms can host models, but they do not provide neutral markets for them.

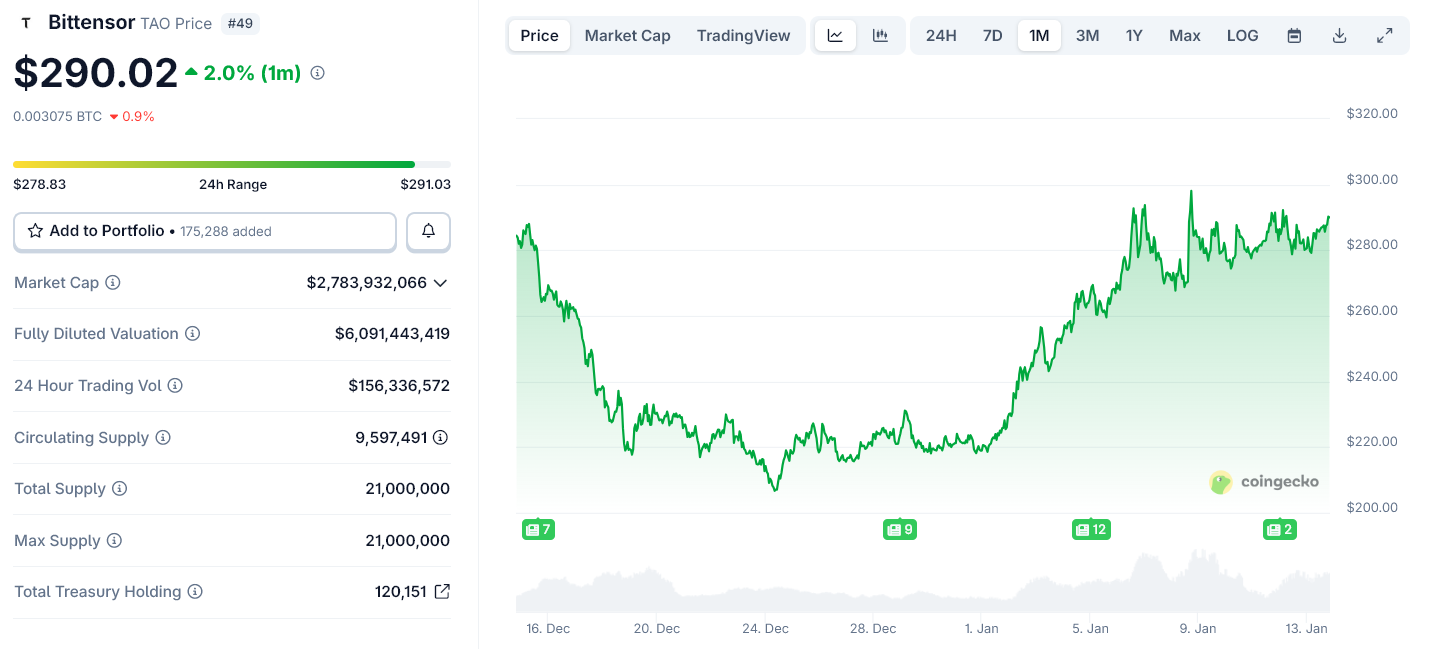

Bittensor does not sell compute. It operates a decentralized network where AI models compete to deliver useful results. The network ranks those models using on-chain performance data and pays them in its native token, TAO.

Each Bittensor subnet acts as a marketplace for a specific type of intelligence, such as text generation, image processing or data analysis. Models that perform well earn more. Models that perform poorly lose influence.
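The reward idea described above can be illustrated with a toy calculation. This is only a minimal sketch, assuming a simple proportional split of a fixed payout among models according to a performance score. It is not Bittensor’s actual on-chain mechanism, and the function name `split_rewards`, the model names, the scores and the payout figure are all hypothetical.

```python
from typing import Dict


def split_rewards(scores: Dict[str, float], emission: float) -> Dict[str, float]:
    """Split a fixed payout among models in proportion to their scores.

    Illustrative assumption only: real networks use more involved scoring
    and consensus rules than a plain proportional split.
    """
    # Clamp negative scores to zero so a bad model cannot earn a negative share.
    clamped = {name: max(score, 0.0) for name, score in scores.items()}
    total = sum(clamped.values())
    if total == 0:
        # No model produced useful work, so nothing is paid out.
        return {name: 0.0 for name in clamped}
    return {name: emission * score / total for name, score in clamped.items()}


# Hypothetical scores for three models competing in one marketplace.
scores = {"model_a": 0.9, "model_b": 0.6, "model_c": 0.0}
rewards = split_rewards(scores, emission=100.0)

for name, amount in rewards.items():
    print(f"{name}: {amount:.2f}")
```

In this toy setup the higher-scoring model receives the larger share and the model with a zero score receives nothing, which mirrors the "perform well, earn more" dynamic in the text.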

This structure becomes more valuable as the number of models increases.

Rubin does not compete with Bittensor. It makes Bittensor’s economic model work at scale.

As Nvidia lowers the cost of running AI, more developers and companies can deploy specialized models. This increases the need for a neutral system to rank, select and pay those models across clouds and enterprises.

Bittensor provides that coordination layer. It turns a flood of AI services into an open and competitive market.

Nvidia controls the physical layer of AI: chips, memory and networking. Rubin strengthens this control by making AI cheaper and faster to operate.

Bittensor operates one layer above that. It handles the economics of intelligence by determining which models get used and rewarded.

As AI moves toward swarms of agents and modular systems, this economic layer becomes harder to centralize.

Rubin’s rollout later in 2026 will expand AI capacity in data centers and clouds. This will drive growth in the number of models and agents competing for real workloads.

Open networks like Bittensor will benefit from this change. They do not replace Nvidia’s infrastructure. They give it a market.

In this sense, Rubin does not undermine decentralized AI. It gives decentralized AI something to organize.